
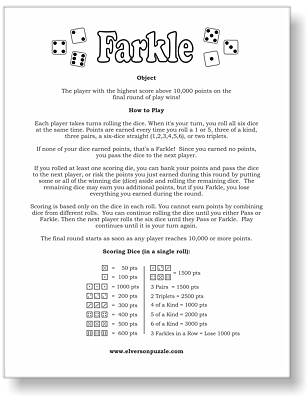

(If you roll two 1s, two 2s, and two 3s, you can either count the two 1s for 200 or use all six dice for Three-pair and 1500 points - you can't use the ones both ways for 1700.)

Markov Decision Processes and Value Iteration

Note: those familiar with MDPs may find the non-standard variable names I use to present this standard subject distracting. My aim is to use the variable names that are most meaningful in the final value-iteration equation. As a courtesy, I've included mouse-hover popups where each such non-standard variable name is introduced (highlighted in blue) explaining the motivation for the change.

A Markov Decision Process (MDP) [2] is a system having a finite set of states $S$. For each state $s \in S$, there is a set of actions $A(s)$ that may be taken. For each action $a \in A(s)$, there is a set of transition probabilities $P_a(s, s')$ defining the probability of transitioning from $s$ to each state $s' \in S$ given that action $a$ was taken while in state $s$.
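To make the MDP definition concrete, here is a minimal Python sketch of the three ingredients named in the text: the states $S$, the actions $A(s)$, and the transition probabilities $P_a(s, s')$. The nested-dictionary layout, the helper names `actions` and `check_mdp`, and the toy two-state example are all my own assumptions for illustration; they are not taken from the article and are not a model of the dice game.

```python
from typing import Dict, Hashable, Set

State = Hashable
Action = Hashable

# Assumed layout: transitions[s][a][s2] holds P_a(s, s2).
# The keys of the outer dict are the finite state set S.
Transitions = Dict[State, Dict[Action, Dict[State, float]]]


def actions(transitions: Transitions, s: State) -> Set[Action]:
    """A(s): the set of actions that may be taken in state s."""
    return set(transitions[s])


def check_mdp(transitions: Transitions, tol: float = 1e-9) -> bool:
    """Return True if every P_a(s, .) is a probability distribution over S."""
    states = set(transitions)
    for by_action in transitions.values():
        for dist in by_action.values():
            # Every successor state must itself belong to S.
            if not set(dist) <= states:
                return False
            # Probabilities must be non-negative and sum to 1.
            if any(p < 0 for p in dist.values()):
                return False
            if abs(sum(dist.values()) - 1.0) > tol:
                return False
    return True


# Hypothetical toy MDP: from "start" you may "roll" (stay or finish with
# equal probability) or "bank" (finish for certain). "done" has no actions.
toy: Transitions = {
    "start": {
        "roll": {"start": 0.5, "done": 0.5},
        "bank": {"done": 1.0},
    },
    "done": {},
}
```

Under this layout, `actions(toy, "start")` yields the two available actions and `check_mdp(toy)` confirms that each action's outgoing probabilities form a valid distribution. A state with an empty action set, such as `"done"` here, is treated as terminal in this sketch.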
